Nvidia, which creates the Tesla V100 Tensor Core GPUs, is one company that makes GPUs for deep learning. It recently reported a 41% increase in its fourth-quarter revenues compared to last year.

The development of SLIDE opens up entirely new possibilities. Anshumali Shrivastava is an assistant professor in Rice’s Brown School of Engineering and helped invent SLIDE with graduate students Beidi Chen and Tharun Medini.

“Our tests show that SLIDE is the first smart algorithmic implementation of deep learning on CPU that can outperform GPU hardware acceleration on industry-scale recommendation datasets with large fully connected architectures,” said Shrivastava.

SLIDE gets past the challenge of GPUs because it takes a completely different approach to deep learning. Currently, the standard training technique for deep neural networks is “back propagation,” and it requires matrix multiplication. That workload is what calls for GPUs, so the researchers altered the neural network training so that it could be solved with hash tables. This new approach greatly reduces the computational overhead for SLIDE.

The current best GPU platform that companies like Amazon and Google use for cloud-based deep learning has eight Tesla V100s, and the price tag is around $100,000. “We have one in the lab, and in our test case we took a workload that's perfect for V100, one with more than 100 million parameters in large, fully connected networks that fit in GPU memory,” Shrivastava said.
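The article says only that training was recast so it could be solved with hash tables; it does not describe the mechanism. The sketch below is one illustrative way such a lookup could work, assuming a signed-random-projection (SimHash) hash over each neuron's weight vector. The function names, table counts, and bit widths are all hypothetical, and this is not SLIDE's actual implementation.

```python
import random

def make_hyperplanes(num_bits, dim, rng):
    """Random hyperplanes for a signed-random-projection (SimHash) hash."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_bits)]

def simhash(vector, hyperplanes):
    """Hash a vector to an integer: one bit per hyperplane, set when the dot product is >= 0."""
    code = 0
    for plane in hyperplanes:
        dot = sum(p * v for p, v in zip(plane, vector))
        code = (code << 1) | (1 if dot >= 0 else 0)
    return code

def build_tables(weights, num_tables, num_bits, rng):
    """Index every neuron's weight vector in several independent hash tables."""
    dim = len(weights[0])
    tables = []
    for _ in range(num_tables):
        planes = make_hyperplanes(num_bits, dim, rng)
        buckets = {}
        for neuron_id, w in enumerate(weights):
            buckets.setdefault(simhash(w, planes), []).append(neuron_id)
        tables.append((planes, buckets))
    return tables

def active_neurons(x, tables):
    """Return ids of neurons that share a bucket with x in at least one table."""
    found = set()
    for planes, buckets in tables:
        found.update(buckets.get(simhash(x, planes), []))
    return found

def sparse_forward(x, weights, tables):
    """Compute dot products only for the retrieved neurons and skip the rest."""
    return {j: sum(w * v for w, v in zip(weights[j], x))
            for j in active_neurons(x, tables)}

# Hypothetical toy layer: 200 neurons with 16 inputs each.
rng = random.Random(0)
dim, num_neurons = 16, 200
weights = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_neurons)]
tables = build_tables(weights, num_tables=4, num_bits=6, rng=rng)

x = list(weights[7])  # an input pointing the same way as neuron 7's weights
outputs = sparse_forward(x, weights, tables)
```

Because `x` equals neuron 7's weight vector, it hashes to the same bucket as that neuron in every table, so neuron 7 is always retrieved. Most other neurons are never touched, which is the kind of saving over a full matrix multiplication that the article alludes to.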

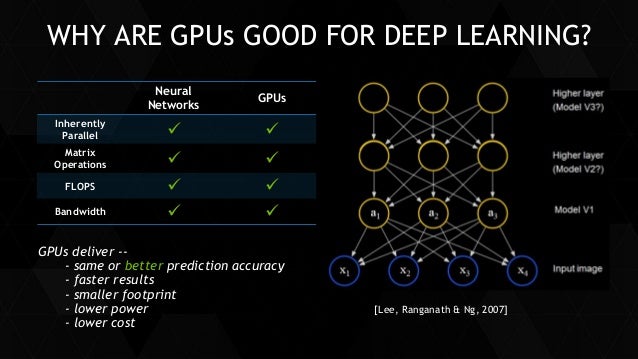

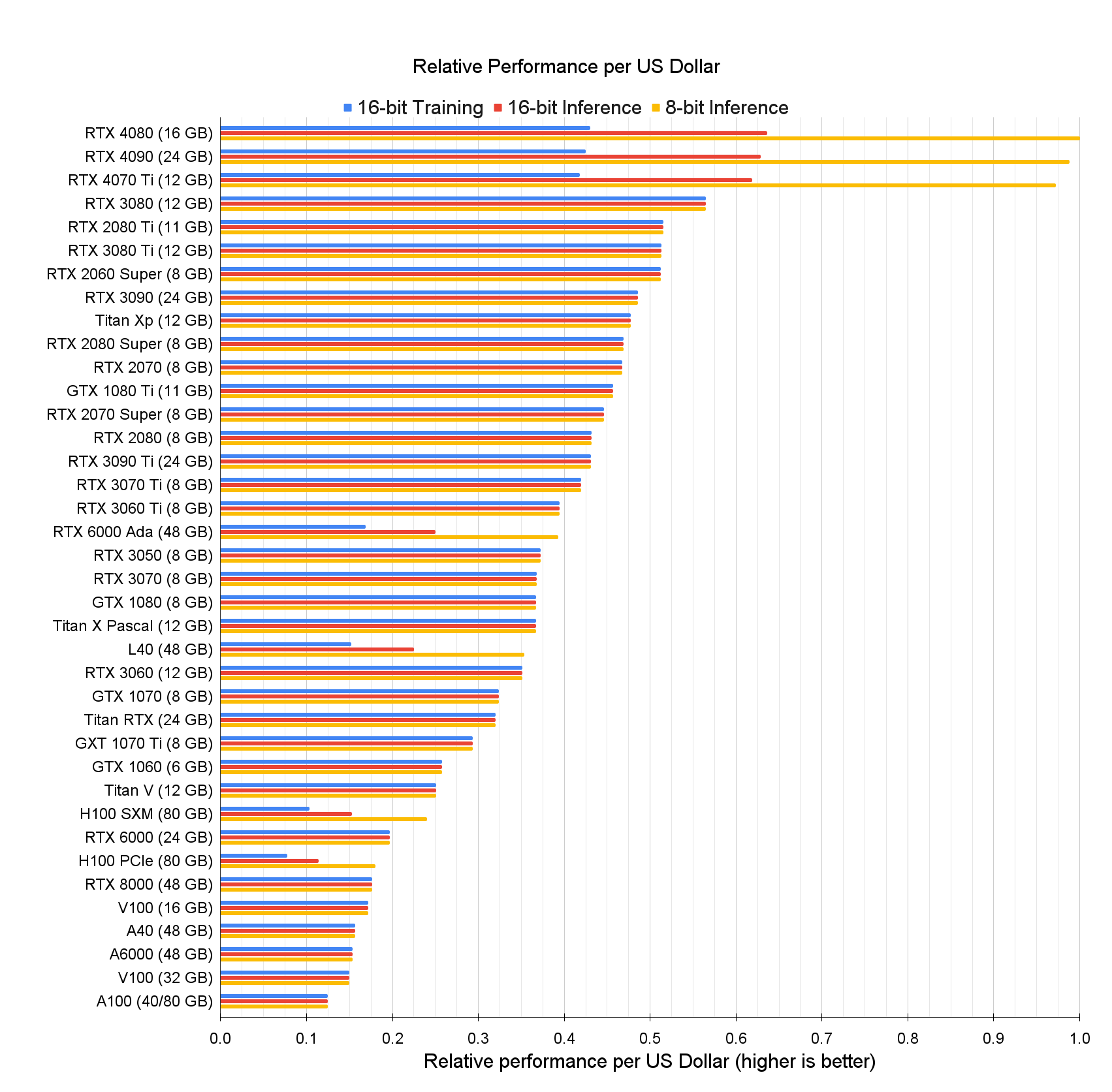

Computer scientists from Rice University, along with collaborators from Intel, have developed a more cost-efficient alternative to GPUs. The new algorithm is called “sub-linear deep learning engine” (SLIDE), and it uses general-purpose central processing units (CPUs) without specialized acceleration hardware. The results were presented at the machine learning systems conference MLSys, held at the Austin Convention Center.

One of the biggest challenges within artificial intelligence (AI) surrounds specialized acceleration hardware such as graphics processing units (GPUs). Before the new developments, it was believed that speeding up deep learning required this specialized acceleration hardware. Many companies have placed great importance on investing in GPUs and specialized hardware for deep learning, which is responsible for technology such as digital assistants, facial recognition, and product recommendation systems.

I have been using Google Colab Pro with their Tesla P100, which has about 16 GB of RAM. But I feel I only get about 60 to 70% of it. In addition, I often need to train something that takes 24 hours or more. For me, a Colab Pro session only lasts about 12 to 16 hours. If I am unlucky and have not saved the trained model by the halfway point of training, I need to retrain everything again.

Thus, I think the more realistic way is to buy a GPU or TPU and build a new desktop for training, not for gaming. I hope I can get a good suggestion, since this will cost me a lot.

For Nvidia, I feel most of their GPUs are aimed at gaming rather than being an affordable TPU or deep learning GPU. For the CPU, I will use an AMD 3300X or below, since the CPU is not really a big deal in deep learning. I can afford about 100 to 550 US dollars (350 is ideal) for a GPU or TPU. I have been searching Google on this question, but most results suggest buying an RTX card for around 700 to 1000 US dollars.

As for the cloud, many people say using Google Cloud for one month or one day can cost 100 dollars. I am not that rich, but I hope I can do intermediate research-level work, or maybe train NLP models like BERT (maybe). I have been training models on satellite images at 720p or above and on Japanese anime coloring, which really requires a good GPU. Even though the suggested GPU is not as good as Google's Tesla P100, I can at least train on it for more than 24 hours.
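On losing progress when a session ends before the model is saved: periodic checkpointing is the usual way to limit the damage, whichever hardware is used. Below is a minimal sketch using only the Python standard library. The `train` loop, the `state` dictionary, and the `stop_after` parameter (which just simulates a session being cut off) are hypothetical placeholders; a real run would save the model and optimizer state with the framework's own tools and write to storage that survives the session.

```python
import os
import pickle
import tempfile

def save_checkpoint(path, state):
    """Write to a temporary file first, then atomically rename it over path."""
    tmp_path = path + ".tmp"
    with open(tmp_path, "wb") as f:
        pickle.dump(state, f)
    os.replace(tmp_path, path)  # avoids leaving a half-written checkpoint

def load_checkpoint(path):
    """Return the saved state, or None if no checkpoint exists yet."""
    if not os.path.exists(path):
        return None
    with open(path, "rb") as f:
        return pickle.load(f)

def train(path, total_steps, save_every, stop_after=None):
    """Resume from the last checkpoint if there is one, saving every save_every steps."""
    state = load_checkpoint(path) or {"step": 0, "weight": 0.0}
    while state["step"] < total_steps:
        if stop_after is not None and state["step"] >= stop_after:
            break  # simulates the session being cut off
        state["weight"] += 0.1  # placeholder for one real training step
        state["step"] += 1
        if state["step"] % save_every == 0:
            save_checkpoint(path, state)
    return state

ckpt = os.path.join(tempfile.mkdtemp(), "model.ckpt")
first = train(ckpt, total_steps=100, save_every=10, stop_after=57)  # interrupted run
resumed = train(ckpt, total_steps=100, save_every=10)               # picks up at step 50
```

In this toy run the interruption at step 57 costs only the 7 steps since the last checkpoint at step 50, rather than the whole run. A smaller `save_every` loses less work but spends more time writing checkpoints.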
